Radiology AI Defense Documentation & Error Reporting

A defensibility and documentation layer for human‑AI diagnostic environments — built by a radiologist who knows the medicolegal terrain.

AI has changed how clinical judgment is formed.

Governance hasn't adapted to that reality.

...

RADDER provides the visibility institutions need to oversee AI‑mediated practice responsibly.

Preserve the evidence. Protect the clinician. Strengthen the institution.

What RADDER Is

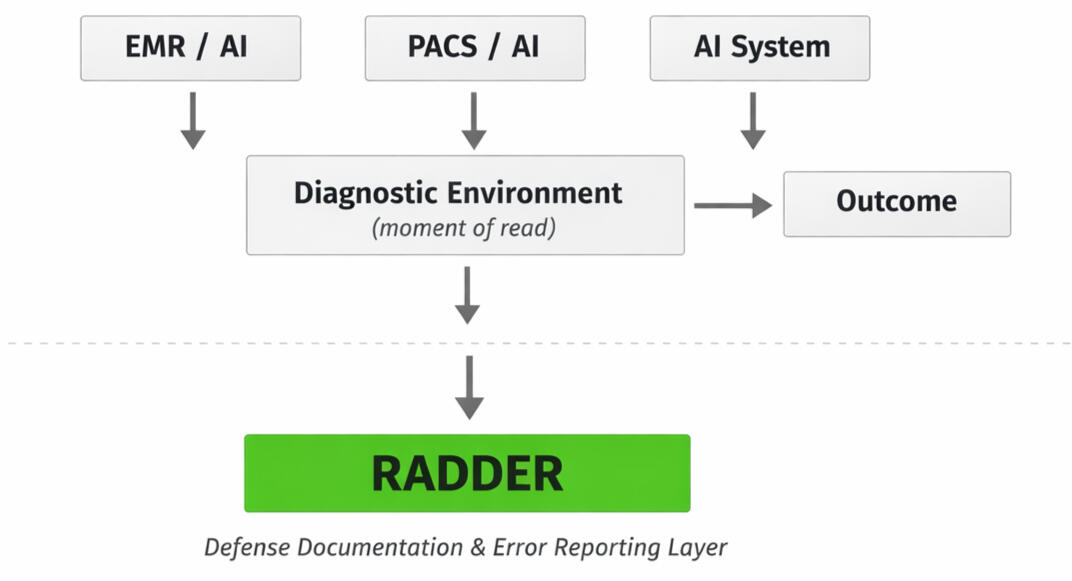

RADDER is a governance framework that makes the cognitive conditions of AI‑assisted diagnosis visible.

It documents how AI shapes perception, workflow, and judgment: the influences that normally disappear and leave clinicians accountable for system‑level behavior they cannot see or reconstruct. By preserving the evidentiary substrate of human‑AI interaction, RADDER enables decisions to be defended, audited, and governed responsibly.

It restores context, protects clinicians, and gives institutions the visibility they need to manage AI‑mediated risk.

What RADDER Evaluates

RADDER evaluates AI‑assisted diagnostic environments across seven governance domains:

Explainability Index

Human‑AI Concordance

Workflow Integration Risk

Error Severity Weighting

Legal Defensibility Score

Cognitive Load & Dependence Signal

Audit Trail Completeness

Why RADDER Exists

AI is accelerating faster than the systems meant to oversee it.

Radiologists are now interpreting inside environments shaped by prompts, interfaces, model outputs, and workflow pressures that leave no durable record of what actually happened at the moment of diagnosis.

When outcomes diverge (when pathology contradicts imaging, when clinical courses shift, when litigation arises), the diagnostic environment has already vanished.

The AI’s suggestion is gone.

The interface state is gone.

The cognitive pressures are gone.

Only the radiologist remains, expected to defend a decision made inside a system that left them no evidence.

...

RADDER exists to fix that.

It preserves the evidentiary substrate of human‑AI interaction: the cues shown, the cues ignored, the workflow context, the downstream truth, and the metadata that normally disappears.

This protects clinicians from hindsight bias, protects institutions from governance gaps, and creates a fair, reconstructable record of how decisions were shaped.

RADDER is about ensuring that as AI accelerates, humans aren’t left holding the liability for invisible system behavior.

Built by a radiologist who knows the medicolegal terrain, RADDER exists because accountability should never depend on memory, guesswork, or retroactive blame.

It should depend on evidence, and RADDER preserves it.

Who RADDER Protects

Radiologists

RADDER preserves the diagnostic environment so radiologists are not judged by hindsight or by system behavior that left no trace. It documents what was shown, what was ignored, what was reasonable, and what pressures shaped the read — creating a defensible record when outcomes diverge.

Institutions

Hospitals deploying AI carry governance obligations they cannot meet with disappearing prompts, opaque interfaces, and undocumented influence. RADDER protects institutions by preserving the evidence needed to demonstrate responsible oversight, fair workflows, and accountable system design.

Patients

Patients deserve diagnostic systems that are transparent, auditable, and safe. By documenting the human‑AI interaction and the conditions under which decisions are made, RADDER strengthens the reliability of the diagnostic process and reduces the risk of unrecognized system‑level errors.

The Diagnostic System Itself

AI‑assisted medicine cannot mature without a way to understand how humans and systems influence each other. RADDER protects the integrity of the diagnostic ecosystem by creating the auditability, traceability, and context that modern workflows lack.

(c) RADDER, LLC. All rights reserved.

DOWNLOAD NOW: $75

A RADDER Institutional Overview (2026)

The AI‑Mediated Risk Brief for Radiology Leadership is a concise operational guide that explains how AI alters diagnostic workflow, perceptual attention, and institutional risk. It gives chairs, QI committees, and hospital leadership the structure and language needed to recognize AI‑mediated failure modes, evaluate vendor claims, and identify governance gaps before deployment. The brief clarifies risk without adding cognitive burden to radiologists.

Coming soon...

The "Augmented" Radiologist:

A conceptual guide examining how AI reshapes diagnostic reasoning and institutional accountability. This work will be available after its peer‑reviewed companion article is published.

A governance framework for understanding how AI reshapes the cognitive conditions of radiologic judgment — and why existing accountability structures still treat judgment as if nothing has changed. This guide names the invisible workflow drift, perceptual asymmetry, and institutional risk‑shifting that define modern AI‑assisted practice.

Coming soon...

RADDER Framework PDF

A formal governance framework for documenting the cognitive conditions of AI‑assisted diagnosis. The full framework will be released following peer‑reviewed publication.

RADDER is a governance framework that makes the cognitive conditions of AI‑assisted diagnosis visible. It documents how AI systems shape perception, workflow, and judgment, and it preserves the contextual substrate that normally disappears once a case is closed. By reconstructing the reasoning environment in which decisions are made, RADDER restores institutional visibility, protects clinicians, and provides a defensible structure for managing AI‑mediated risk.


About the Founder

Chenara A. Johnson, MD, PhD

Clinical Assistant Professor of Emergency & Trauma Radiology

Founder, RADDER

Chenara Johnson is an emergency and trauma radiologist with an MD/PhD in Bioengineering and advanced training in imaging AI, high‑acuity diagnostics, and governance‑aligned technology assessment. Her work focuses on the cognitive and operational conditions under which clinicians practice in AI‑mediated environments, and on the institutional blind spots that arise when governance does not adapt to those conditions.

She holds the RSNA Advanced Certificate in Imaging AI and the RSNA Emergency Radiology AI Certificate, reflecting specialized expertise at the intersection of acute care imaging and machine‑assisted diagnostic workflows. Her background spans biomedical engineering, device development, medicolegal analysis, and quality oversight across high‑acuity clinical settings.

Through RADDER, she develops governance‑forward frameworks that make the cognitive conditions of AI‑assisted diagnosis visible. Her work centers on restoring institutional context, strengthening defensibility, and supporting clinicians practicing within increasingly complex diagnostic systems.


For inquiries related to RADDER, upcoming publications, or institutional interest...

CONTACT US:


Coming soon...